Neural Networks Learning

Coursera machine learning exercise 4.pdf (file size: 356 kb)

Backpropagation


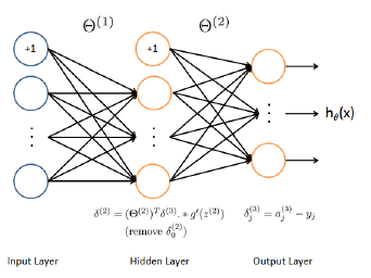

For each node j in layer l, we would like to compute an "error term" that measures how much that node was "responsible" for any errors in our output.

For an output node, we can directly measure the difference between the network's activation and the true target value, and use that difference to define the output error. For the hidden units, the error terms are computed from a weighted sum of the error terms of the nodes in the next layer, with the weights taken from the connections between the two layers.

The detailed steps for backpropagation are on page 9 of exercise 4.

Regularization is then added to both the cost function and the backpropagation gradients.
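As a minimal sketch of the steps above, the Python below computes the regularized cost and the backpropagation gradients for a small network. It assumes a three-layer network with sigmoid activations, a cross-entropy cost, and bias weights stored in column 0 of each weight matrix and left out of the regularization term. The names `forward`, `cost_and_gradients`, `theta1`, `theta2`, and `lam` are illustrative choices here, not taken from the exercise's own code.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(theta1, theta2, x):
    """Forward pass for one example. A bias unit (1.0) is prepended to a1 and a2."""
    a1 = [1.0] + list(x)
    a2 = [1.0] + [sigmoid(sum(w * a for w, a in zip(row, a1))) for row in theta1]
    a3 = [sigmoid(sum(w * a for w, a in zip(row, a2))) for row in theta2]
    return a1, a2, a3


def cost_and_gradients(theta1, theta2, X, Y, lam):
    """Regularized cross-entropy cost and its gradients (assumed 3-layer sigmoid net).

    theta1: hidden x (inputs + 1) weights, theta2: outputs x (hidden + 1) weights.
    X: list of input vectors, Y: list of target vectors, lam: regularization strength.
    """
    m = len(X)
    cost = 0.0
    grad1 = [[0.0] * len(row) for row in theta1]
    grad2 = [[0.0] * len(row) for row in theta2]

    for x, y in zip(X, Y):
        a1, a2, a3 = forward(theta1, theta2, x)
        cost += sum(-yk * math.log(hk) - (1 - yk) * math.log(1 - hk)
                    for yk, hk in zip(y, a3))

        # Output error term: activation minus true target value.
        delta3 = [hk - yk for hk, yk in zip(a3, y)]

        # Hidden error term: weighted sum of the next layer's error terms,
        # scaled by the sigmoid derivative a * (1 - a). The bias unit
        # (index 0 of a2) has no error term, so start at index 1.
        delta2 = [
            sum(theta2[k][j] * delta3[k] for k in range(len(delta3)))
            * a2[j] * (1 - a2[j])
            for j in range(1, len(a2))
        ]

        # Accumulate the per-example gradient contributions.
        for k, dk in enumerate(delta3):
            for j, aj in enumerate(a2):
                grad2[k][j] += dk * aj
        for j, dj in enumerate(delta2):
            for i, ai in enumerate(a1):
                grad1[j][i] += dj * ai

    # Average over the examples, then add regularization for every weight
    # except those in the bias column (column 0).
    cost /= m
    cost += lam / (2 * m) * sum(
        w * w for theta in (theta1, theta2) for row in theta for w in row[1:]
    )
    for theta, grad in ((theta1, grad1), (theta2, grad2)):
        for r, row in enumerate(theta):
            for c, w in enumerate(row):
                grad[r][c] = grad[r][c] / m + (lam / m * w if c > 0 else 0.0)

    return cost, grad1, grad2
```

A quick way to gain confidence in a sketch like this is a gradient check: nudge one weight up and down by a small amount, recompute the cost each time, and compare the resulting slope with the matching gradient entry.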
